CTR Suppression Intelligence Engine - Baseline-Adjusted AI Search Impact Modeling Platform

Statistical suppression modeling system designed to quantify interface-driven CTR loss using deterministic baseline scoring, volatility modeling, and revenue impact projection.

Project Overview

AI-driven search interfaces are fundamentally changing organic traffic behavior.

AI Overviews, enriched SERP features, and zero-click experiences reduce click-through rates even when rankings remain stable.

Traditional SEO tools track:

- Position changes

- Keyword visibility

- Traffic fluctuations

They do not:

- Isolate interface-driven suppression from ranking volatility

- Model expected CTR behavior by ranking position

- Quantify revenue exposure caused by AI-induced click loss

The CTR Suppression Intelligence Engine was built to solve that gap.

It ingests exported Search Console data, constructs historical CTR baselines, applies statistical validation, models volatility-adjusted risk scores, and quantifies revenue exposure, all through deterministic backend modeling.

This is not a traffic comparison dashboard. It is a suppression modeling intelligence engine.

What It Does

The system ingests:

- Google Search Console query-level CSV exports

- Query, date, CTR, impressions, and average position data

- Configurable AI rollout cutoff date

Then computes:

- Position-Normalized CTR Baseline

- Expected CTR vs Actual CTR Deviation

- Ranking Stability Filtering

- Effective CTR Suppression Score

- Statistical Significance Validation (t-test)

- Revenue Loss Estimation

- 6-Month Revenue Exposure Projection

- Intent-Level Suppression Analysis

- Volatility-Adjusted Risk Score

- High-Risk Query Detection

Every metric is derived from deterministic backend modeling; the frontend only renders computed results.

No synthetic demo metrics. No client-side scoring logic.

Core Capabilities

Baseline-Adjusted CTR Modeling

Builds historical expected CTR curves by ranking position and applies them to post-cutoff data to isolate suppression independent of ranking movement.
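A minimal sketch of how such a baseline could be built, assuming each row is a dict with `date` (ISO string), `position`, `ctr`, and `impressions` keys mirroring the Search Console export. The function names, the bucketing by rounded position, and the impression weighting are illustrative assumptions, not the engine's confirmed method.

```python
from collections import defaultdict


def build_baseline(rows, cutoff):
    """Impression-weighted CTR per rounded position, using pre-cutoff rows only."""
    clicks = defaultdict(float)
    impressions = defaultdict(float)
    for row in rows:
        if row["date"] < cutoff:  # ISO date strings compare chronologically
            bucket = round(row["position"])
            clicks[bucket] += row["ctr"] * row["impressions"]
            impressions[bucket] += row["impressions"]
    return {b: clicks[b] / impressions[b] for b in impressions if impressions[b] > 0}


def ctr_deviation(row, baseline):
    """Expected CTR minus actual CTR; positive values indicate suppression."""
    expected = baseline.get(round(row["position"]))
    if expected is None:
        return None  # no historical data for this position bucket
    return expected - row["ctr"]
```

Because the expected CTR is looked up by the row's own position, a query that simply dropped in rank is compared against the curve for its new position rather than its old one.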

Ranking Stability Filtering

Removes queries with significant ranking shifts to prevent false suppression attribution.
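One way to express this filter, as a sketch: compare each query's mean position before and after the cutoff and keep only queries whose shift stays within a tolerance. The `max_shift` value of 1.0 and the function name are hypothetical; the source does not state the actual threshold.

```python
from statistics import mean


def stable_queries(rows, cutoff, max_shift=1.0):
    """Return queries whose mean position moved by at most max_shift across the cutoff."""
    pre, post = {}, {}
    for row in rows:
        side = pre if row["date"] < cutoff else post
        side.setdefault(row["query"], []).append(row["position"])
    return {
        query
        for query in pre
        if query in post  # a query needs data on both sides to be compared
        and abs(mean(post[query]) - mean(pre[query])) <= max_shift
    }
```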

Statistical Validation Engine

Uses a two-sample t-test on pre-cutoff and post-cutoff CTR to determine whether the observed suppression is statistically significant rather than noise.
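As an illustration of the two-sample test, the sketch below computes Welch's t statistic and its degrees of freedom from pre- and post-cutoff CTR samples. Choosing the Welch variant is an assumption (the source only says "t-test"), and the p-value helper uses a normal approximation, which is only reasonable for larger samples; a production system would more likely use a statistics library for the exact t distribution.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def welch_t(pre, post):
    """Welch's t statistic and degrees of freedom for mean(pre) - mean(post).

    Requires at least two observations per sample and non-zero total variance.
    """
    n1, n2 = len(pre), len(post)
    v1, v2 = variance(pre) / n1, variance(post) / n2
    t = (mean(pre) - mean(post)) / sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


def approx_one_sided_p(t):
    """Normal approximation to P(T >= t); an assumption, not an exact t-test p-value."""
    return 1.0 - NormalDist().cdf(t)
```

A large positive `t` means post-cutoff CTR is lower than pre-cutoff CTR by more than sampling noise would explain.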

-

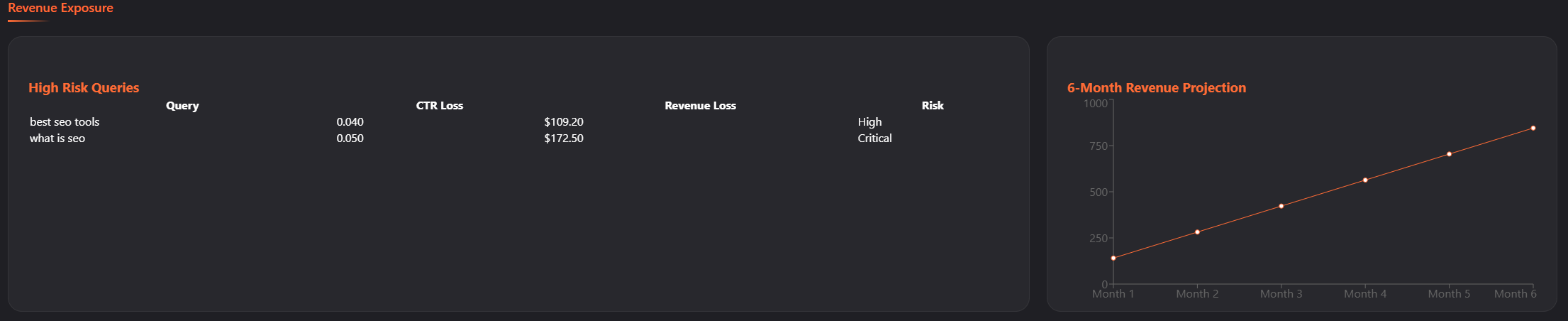

Revenue Impact Modeling

Estimates revenue loss using lost clicks, conversion rate, and average order value. Provides query-level revenue exposure.
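The arithmetic described here can be sketched as follows. Lost clicks are taken as the gap between expected and actual CTR multiplied by impressions; the function names are hypothetical, and the flat extrapolation in `project_exposure` is an assumed simplification of the 6-month projection.

```python
def revenue_loss(expected_ctr, actual_ctr, impressions, conversion_rate, avg_order_value):
    """Estimated revenue lost for one query over the period the impressions cover."""
    lost_clicks = max(expected_ctr - actual_ctr, 0.0) * impressions
    return lost_clicks * conversion_rate * avg_order_value


def project_exposure(monthly_loss, months=6):
    """Flat projection: assumes the current monthly loss simply persists."""
    return monthly_loss * months
```

For example, an expected CTR of 10% against an actual CTR of 6% on 10,000 impressions gives 400 lost clicks; at a 2% conversion rate and an average order value of 50, that is 400 in lost revenue for the period.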

Volatility Scoring

Applies rolling suppression modeling to detect CTR instability and integrate volatility into risk scoring.
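A rolling standard deviation over a daily suppression series is one plausible reading of "rolling suppression modeling". The sketch below assumes that interpretation; the 7-day window and the function name are hypothetical.

```python
from statistics import pstdev


def rolling_volatility(series, window=7):
    """Population standard deviation over each trailing window of the series."""
    return [
        pstdev(series[i - window + 1 : i + 1])
        for i in range(window - 1, len(series))
    ]
```

A flat suppression series yields zeros, while a series that swings from day to day yields larger values that can feed into the risk score.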

Intent Classification Engine

Classifies queries into informational, commercial, transactional, and navigational categories, then measures suppression intensity across intent types.
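The source does not say how queries are classified. A simple deterministic approach, shown here purely as an assumption, is keyword matching with a fallback to informational; the keyword lists and function names are hypothetical placeholders.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical keyword rules, checked in order; first match wins.
INTENT_RULES = [
    ("transactional", {"buy", "price", "coupon", "order"}),
    ("commercial", {"best", "review", "vs", "compare"}),
    ("navigational", {"login", "website", "contact"}),
]


def classify_intent(query):
    """Return the first intent whose keywords appear among the query's tokens."""
    tokens = set(query.lower().split())
    for intent, keywords in INTENT_RULES:
        if tokens & keywords:
            return intent
    return "informational"


def suppression_by_intent(records):
    """Mean suppression per intent from (query, suppression) pairs."""
    groups = defaultdict(list)
    for query, suppression in records:
        groups[classify_intent(query)].append(suppression)
    return {intent: mean(values) for intent, values in groups.items()}
```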

Deterministic Risk Scoring

Combines effective CTR loss, revenue exposure, statistical significance, and volatility factor to compute a final risk score and risk level classification.
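To illustrate how the four inputs might be combined, the sketch below uses a weighted sum of components normalized to the 0 to 1 range, scaled to 0 to 100. The weights, the level thresholds, and the function names are all hypothetical; the source states which factors are combined but not the formula.

```python
def _clamp(value):
    """Restrict a value to the range 0 to 1."""
    return max(0.0, min(value, 1.0))


def risk_score(ctr_loss, revenue_share, significant, volatility):
    """Weighted 0-100 score; the weights are illustrative assumptions.

    ctr_loss: relative CTR loss (0 to 1)
    revenue_share: query revenue exposure divided by the largest exposure (0 to 1)
    significant: whether the statistical test flagged the suppression
    volatility: volatility factor normalized to 0 to 1
    """
    return 100.0 * (
        0.4 * _clamp(ctr_loss)
        + 0.3 * _clamp(revenue_share)
        + 0.2 * (1.0 if significant else 0.0)
        + 0.1 * _clamp(volatility)
    )


def risk_level(score):
    """Map a score to a level using hypothetical thresholds."""
    if score >= 70:
        return "high"
    if score >= 40:
        return "medium"
    return "low"
```

The same inputs always produce the same score, which is the property the deterministic design relies on.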

-

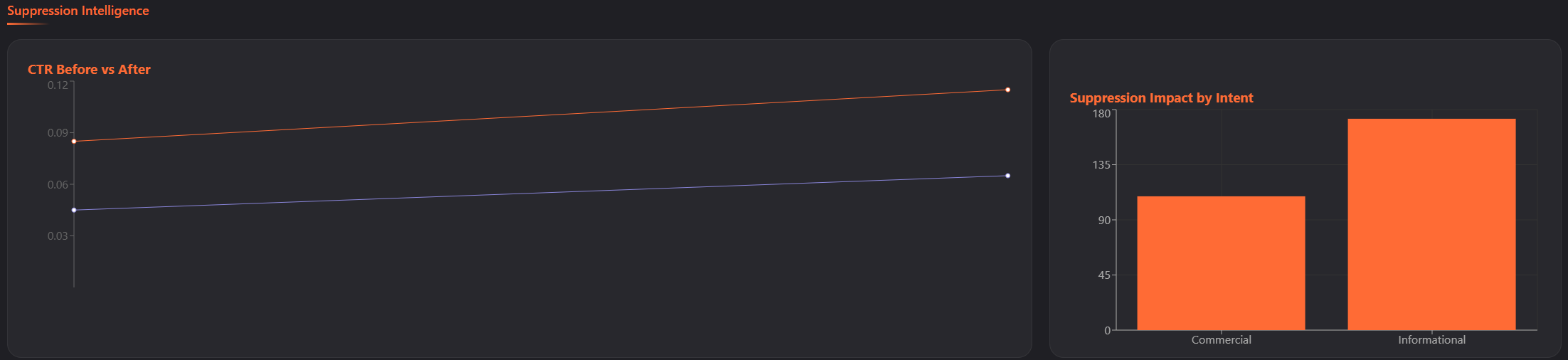

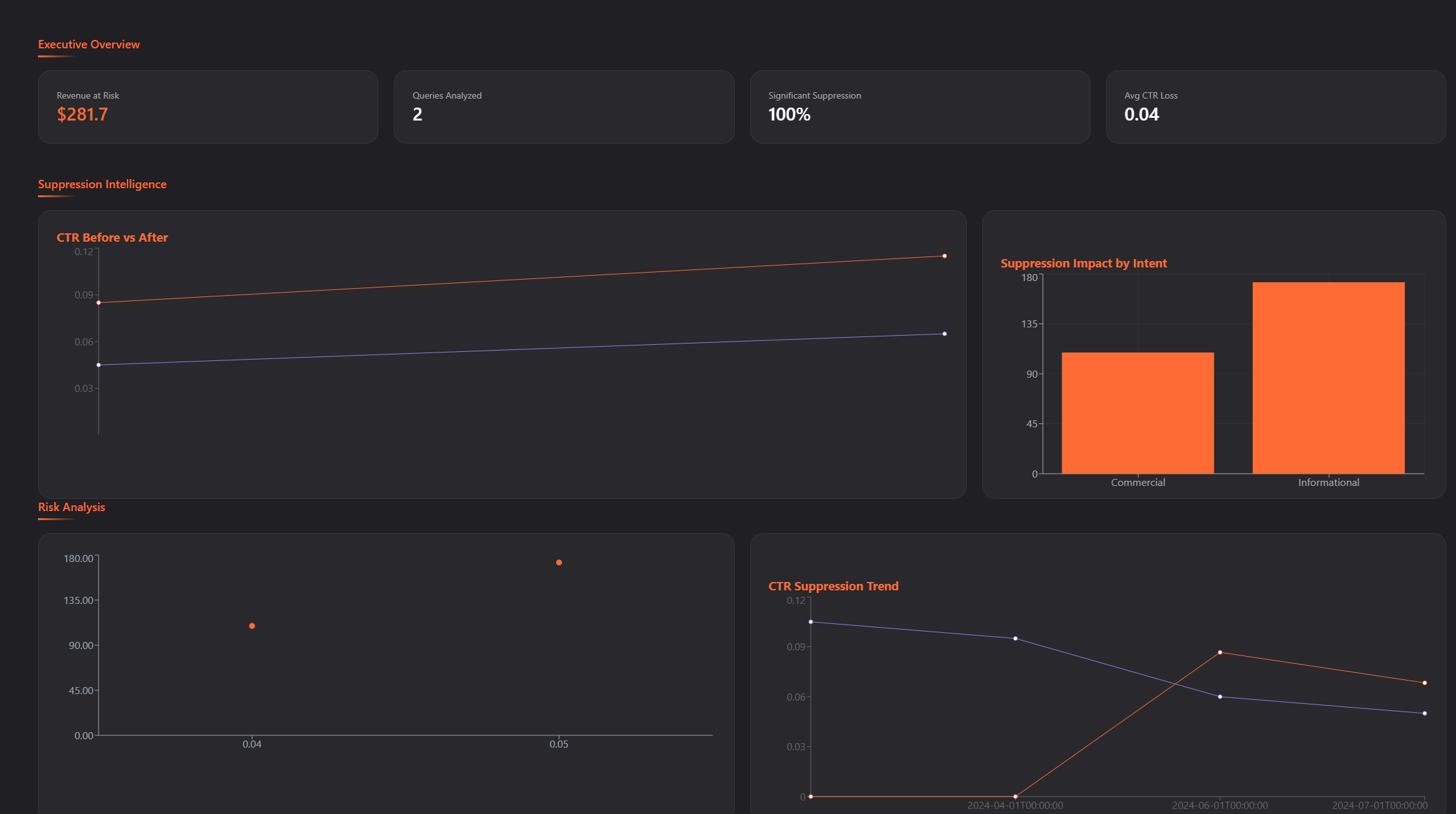

Executive Intelligence Dashboard

Visualizes revenue-at-risk KPIs, a suppression heatmap, the intent-level impact distribution, volatility trends, a high-risk query table, and the 6-month revenue projection.

Local-First Architecture

Runs entirely on FastAPI + React with no external AI APIs or third-party black-box scoring services. All modeling logic is deterministic and server-side.

The Challenge

Modern search environments introduce interface-driven suppression that cannot be detected by:

- Rank tracking tools

- Visibility indexes

- Traditional SEO reporting

- Keyword position monitoring

Organic traffic declines may not correlate with ranking drops.

This creates analytical blind spots:

- Was the loss caused by ranking?

- Was it seasonal?

- Or was it interface-driven suppression?

There was no lightweight, self-hosted system capable of:

- Modeling expected CTR behavior

- Isolating suppression from rank volatility

- Quantifying revenue impact deterministically

- Integrating volatility into risk scoring

The Solution

Built a full-stack suppression intelligence engine composed of:

Backend:

- FastAPI modeling API

- Historical CTR baseline modeling

- Position-normalized expected CTR engine

- Ranking stability filter

- Statistical significance testing layer

- Volatility computation engine

- Revenue loss modeling logic

- Deterministic risk scoring system

- Structured JSON intelligence output

Frontend:

- React dashboard interface

- TypeScript strict typing

- Dark-mode executive UI

- Suppression heatmap visualization

- Intent impact bar modeling

- Volatility trend chart

- Revenue projection modeling

- High-risk query detection table

The system enforces strict backend authority: risk scores and suppression metrics cannot be manipulated client-side.

Why It Matters

As AI interfaces reshape search behavior, businesses must understand:

- Which queries are structurally suppressed

- Where revenue exposure exists

- How volatility evolves post-AI rollout

- Which intent categories are most vulnerable

- Which queries represent strategic risk

This engine provides a measurable, deterministic framework for quantifying AI-driven CTR suppression.

It shifts suppression analysis from observational reporting to structured baseline modeling and statistical validation.

Future Expansion

- Direct Google Search Console API integration

- AI Overview detection simulation layer

- SERP feature exposure modeling

- Multi-domain suppression comparison

- Historical suppression tracking

- Multi-cutoff event modeling

- Automated narrative generation layer

- SaaS-ready multi-tenant architecture

- Persistent database storage

- Trend comparison between analysis runs

Project Positioning Statement

This project represents the architectural foundation for deterministic CTR suppression modeling, shifting SEO analysis from rank-based reporting to baseline-adjusted, volatility-aware revenue exposure intelligence for the AI search era.

Project Details

- Category: SEO Intelligence

- Architecture: Full-Stack

- Year: 2026